All posts tagged in Vision.

Robots in unstructured spaces, such as homes, have struggled to generalize across unpredictable settings. In this context, a few projects stand out more than the rest – for example, the Everyday Robot project at Google X and Figure’s 01 home robot. After being tied to

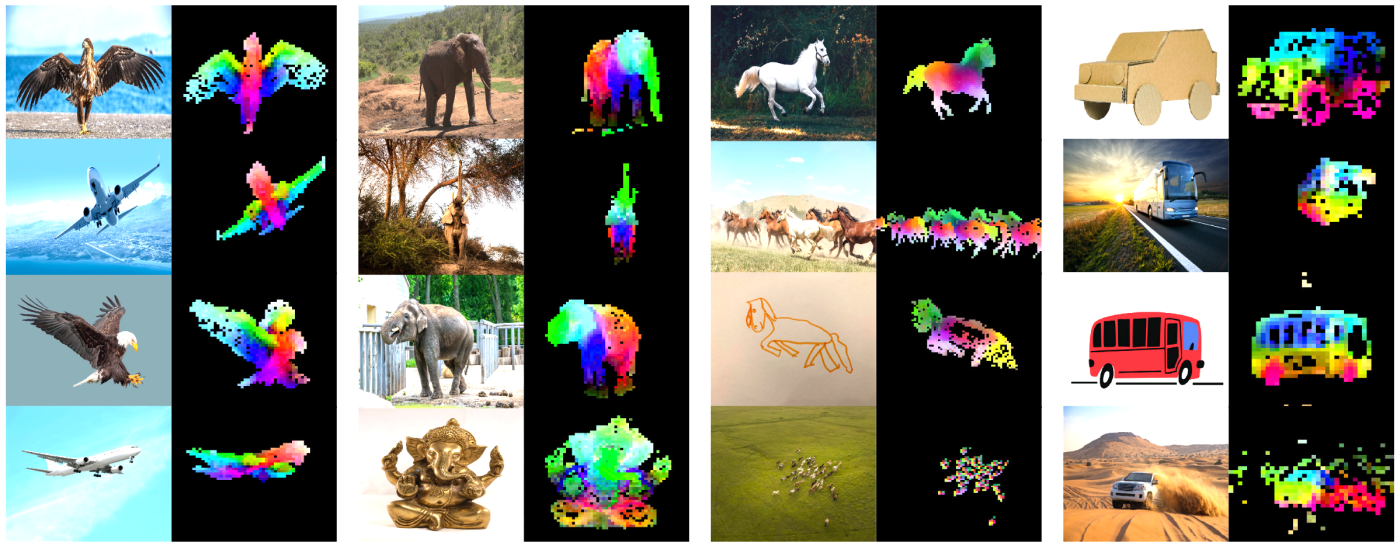

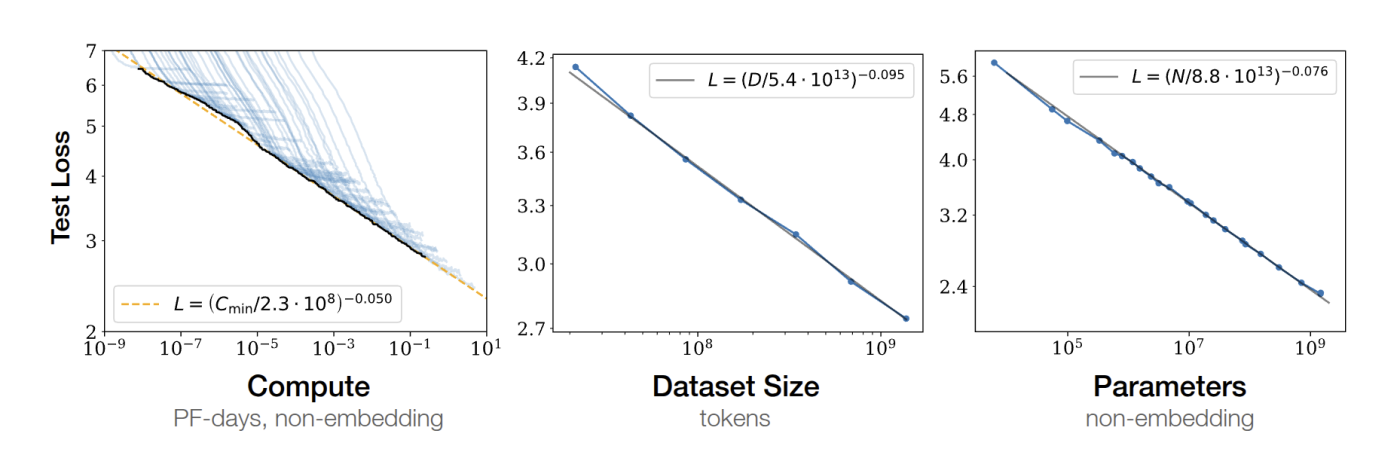

Let’s talk about DINOv2, a paper that takes a major leap forward in the quest for general-purpose visual features in computer vision. Inspired by the success of large-scale self-supervised models in NLP (think GPTs and T5), the authors at Meta have built a visual foundation

Hey folks! I’m thrilled to share a research paper that I’ve been working on, which dives deep into vision-based navigation for indoor robots. In the paper, titled “Real-time Vision-based Navigation for a Robot in an Indoor Environment”, I explore

Sagar Manglani

manglanisagar@gmail.com

Blogger

I write articles on computer vision and robotics.