Robots in unstructured spaces, such as homes, have struggled to generalize across unpredictable settings. A few projects stand out in this context, for example the Everyday Robot project at Google X and Figure’s Figure 01 humanoid. After a period of collaboration with OpenAI, Figure has decided to build its own AI model, called Helix. With Helix, Figure introduces a Vision-Language-Action (VLA) model that attempts to bridge the gap between robots operating in structured and unstructured environments, offering a unified system for humanoid robot control. Approaching Helix’s claims as external observers, and with academic rigor, let’s explore what the system brings to the table and where challenges may remain.

A New Paradigm for Humanoid Robotics

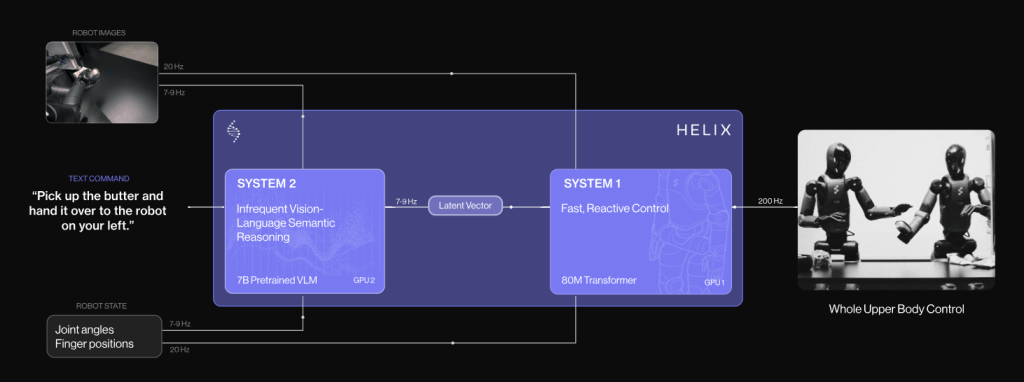

Figure positions Helix as a first-of-its-kind VLA model capable of full upper-body control in humanoid robots. The system integrates perception, language comprehension, and learned control, enabling a robot to respond dynamically to diverse real-world scenarios. Unlike previous approaches that required extensive task-specific fine-tuning, Helix uses a single set of neural network weights for a broad range of tasks, from simple object grasping to multi-robot collaborative behaviors.

To bring robots into homes, we need a step change in capabilities. Helix can generalize to virtually any household item.

Brett Adcock, Founder, Figure AI

The core innovations of Helix include:

Upper-body dexterity: Helix outputs continuous, high-rate control for the entire humanoid upper body, including the wrists, fingers, head, and torso.

Multi-robot interaction: It facilitates coordinated tasks between multiple robots, a crucial capability for real-world deployment.

Zero-shot object handling: The system generalizes across thousands of unseen household items, manipulating them based on natural language commands without requiring explicit retraining.

Scaling Challenges in Household Robotics

Bringing robots into the home is an entirely different challenge from automating industrial processes. Household environments are unpredictable, requiring a robot to handle everything from delicate glassware to loosely crumpled clothing. Traditional heuristic-based programming and even imitation learning approaches demand extensive human effort, making them impractical at scale.

Helix attempts to circumvent these bottlenecks by drawing on the semantic knowledge of large-scale Vision-Language Models (VLMs) to generalize zero-shot. By translating abstract, high-level commands into real-time motor control, it represents a step toward making household robotics more feasible.

Similar efforts to scale robot capabilities have emerged from projects like Mobile ALOHA (Mobile ALOHA Project), a teleoperation-based system that collects diverse human demonstrations to improve bimanual manipulation. Meanwhile, OpenVLA (OpenVLA Paper) is an open-source vision-language-action model trained on a large corpus of robot demonstrations, which makes it easier to adapt for research and commercial use. Helix extends this line of work by integrating robust perception and high-frequency, real-time control in a single framework.

However, there are still unanswered questions:

- How does the system handle ambiguous or conflicting natural language commands?

- What failure cases emerge when moving beyond controlled testing environments?

- Can the system maintain long-term reliability across varied environments?

The "System 1, System 2" Approach

A particularly interesting aspect of Helix is its dual-system architecture:

- System 2 (S2): A vision-language model trained on internet-scale data that operates at ~7-9 Hz to interpret high-level semantic goals.

- System 1 (S1): A fast visuomotor policy running at 200 Hz, translating S2’s high-level representations into real-time, continuous robot actions.
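The division of labor between the two systems can be illustrated with a small simulation. This is a minimal, single-threaded sketch under stated assumptions: `system2_update`, `system1_action`, and the `Latent` type are hypothetical placeholders, not Figure’s code, and the fixed 8 Hz S2 rate is simply one value inside the reported 7-9 Hz range. It shows the key property of the design: S1 emits an action on every 200 Hz tick using whatever representation S2 produced most recently, so the fast loop never waits on the slow one. At these rates S1 issues roughly 22 to 29 actions per S2 update (200/9 ≈ 22, 200/7 ≈ 29).

```python
from dataclasses import dataclass
from typing import List

S1_HZ = 200  # fast visuomotor policy rate (from the article)
S2_HZ = 8    # slow VLM rate; the article cites ~7-9 Hz, 8 Hz is an illustrative choice


@dataclass
class Latent:
    """Hypothetical stand-in for the semantic representation S2 hands to S1."""
    goal: str
    version: int


def system2_update(instruction: str, version: int) -> Latent:
    # Hypothetical placeholder: a real S2 would run a VLM over camera
    # images and the language instruction to produce this representation.
    return Latent(goal=instruction, version=version)


def system1_action(latent: Latent, tick: int) -> List[float]:
    # Hypothetical placeholder: a real S1 would map images, robot state,
    # and the latent to continuous joint targets. Here we return a dummy
    # command that records which latent version and tick produced it.
    return [float(latent.version), float(tick), 0.0]


def run(instruction: str, seconds: float) -> dict:
    """Simulate both loops on one clock; S1 always reads the newest latent."""
    total_ticks = int(seconds * S1_HZ)
    ticks_per_s2 = S1_HZ // S2_HZ  # 25 fast steps per slow update at 8 Hz
    latent = system2_update(instruction, version=0)
    s2_calls = 1
    actions = []
    for tick in range(total_ticks):
        if tick > 0 and tick % ticks_per_s2 == 0:
            # Simplification: a new S2 result arrives instantly at fixed
            # intervals. S1 never blocks waiting for it.
            latent = system2_update(instruction, version=s2_calls)
            s2_calls += 1
        actions.append(system1_action(latent, tick))
    return {
        "s1_steps": len(actions),
        "s2_updates": s2_calls,
        "last_action": actions[-1],
    }


if __name__ == "__main__":
    stats = run("put the apple in the bowl", seconds=1.0)
    print(stats["s1_steps"], stats["s2_updates"])  # prints: 200 8
```

Simulating one second yields 200 S1 actions but only 8 S2 updates. In a real deployment the two systems would run concurrently, and S2’s inference latency means S1 acts on a slightly stale representation; the simulated clock ignores that latency for clarity.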

Results and Open Questions

Helix represents an ambitious leap toward general-purpose humanoid robotics. By integrating Vision-Language Models with real-time motor control, Figure’s demonstrations show a system performing flexible, high-dimensional tasks with minimal task-specific supervision.

If Helix can continue to refine its approach and withstand real-world challenges, it could mark a significant shift in how robots interact with their surroundings, potentially making household robotics a reality sooner than expected.

For now, Helix stands as a promising research direction.